Introduction to the Data Centre Cooling Market

The explosive growth of AI, cloud computing, and dense server racks.

The critical role of cooling: Why managing heat is the biggest hurdle for modern data centres.

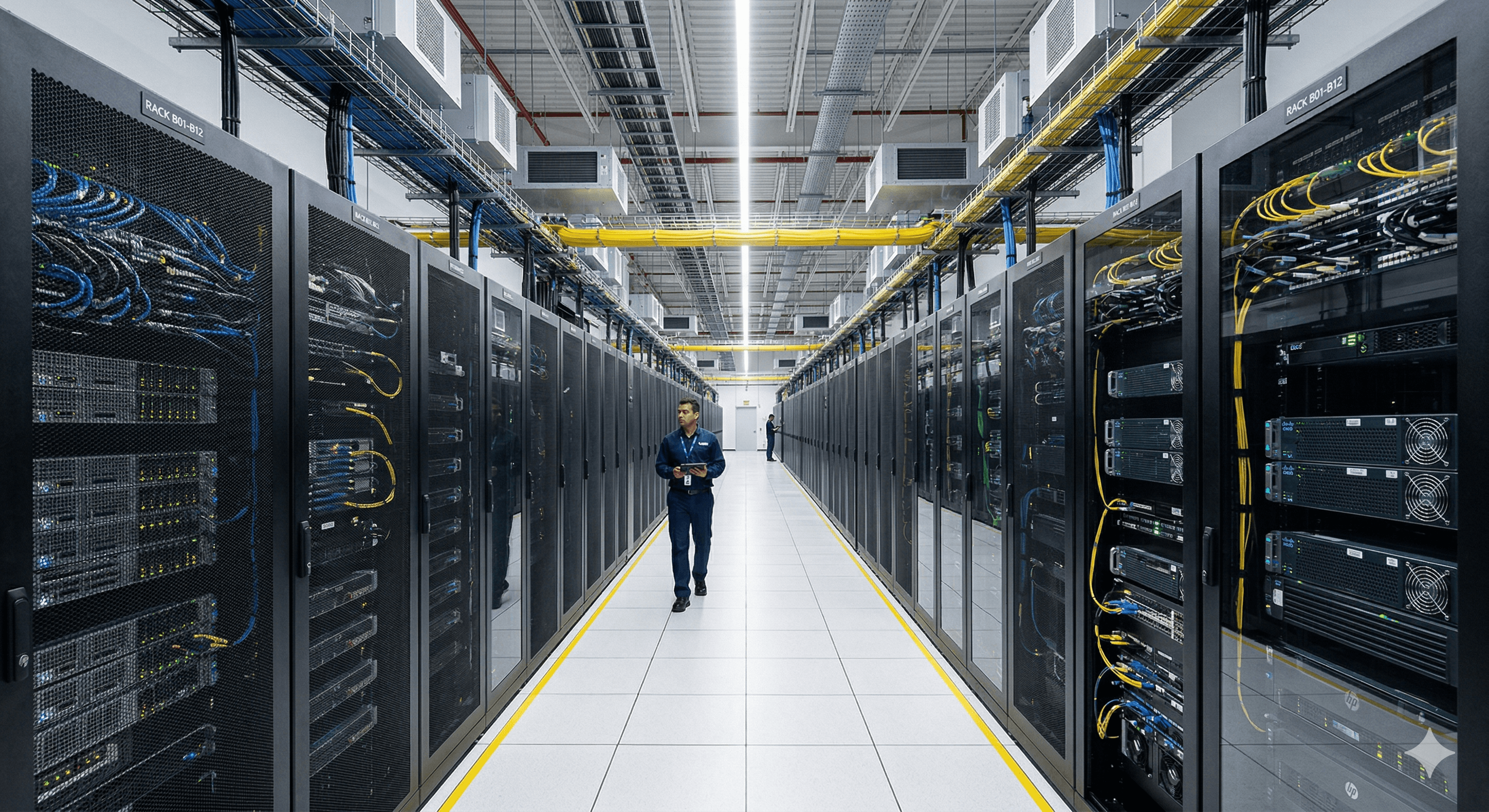

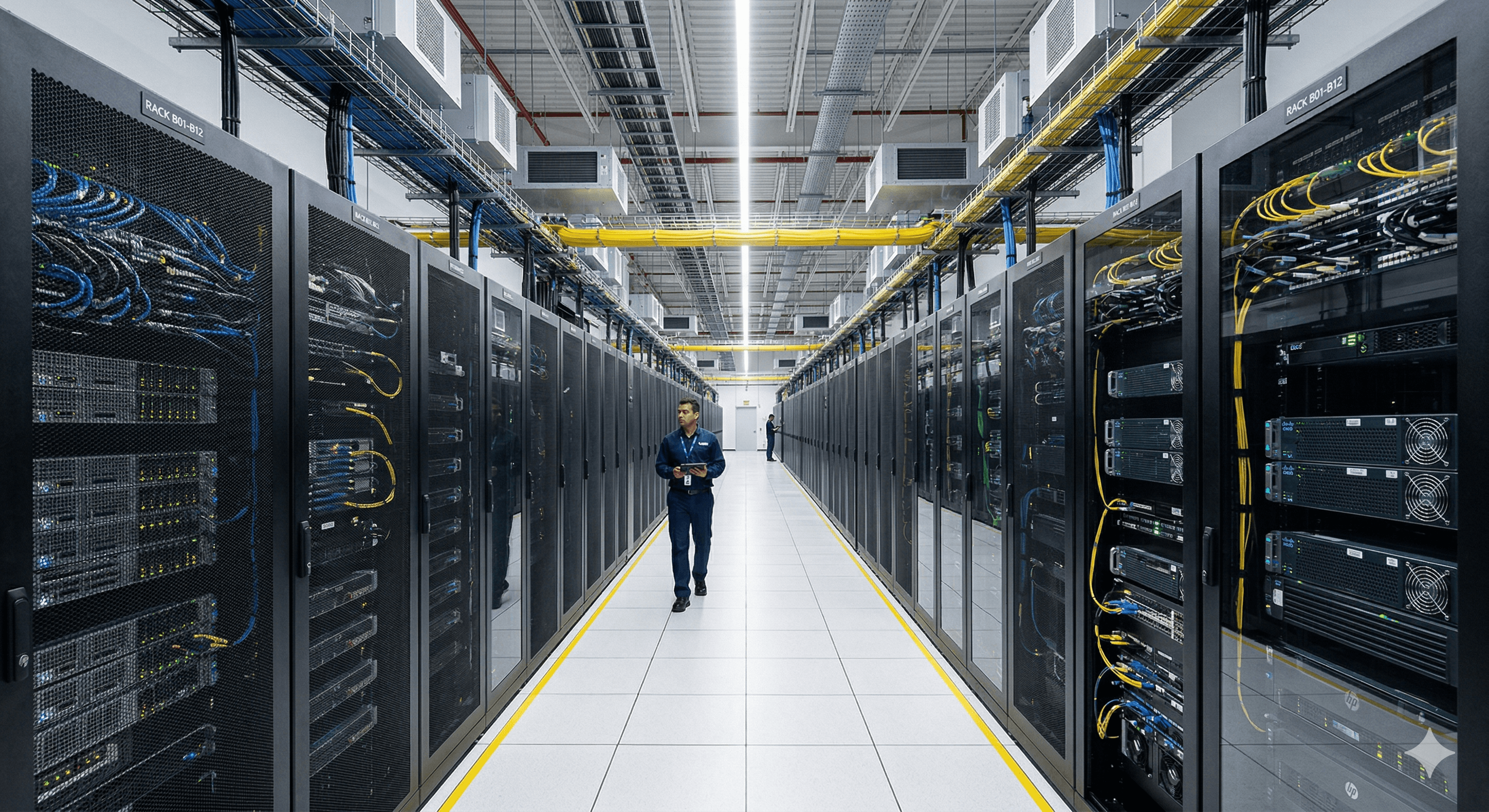

Data centres, and how they operate, have become a hot topic over the last five years, driven by the rise of AI and its computing requirements. As with many technological advances, the original (and understandable) hype has been followed by a very real infrastructure and engineering boom, leading to changes in how that infrastructure is operated and managed. One necessary operation is the cooling required to keep the CPUs at the optimal temperature for performance. And as a chemical engineer, I was always going to find the fluid the most interesting element…

The Current State of the Cooling Market:

Traditional data centre cooling has long relied on established HVAC systems, which cool the air within the facility and reject heat through open-to-air heat exchangers. This lovely article by the IEA clearly explains how data centre electricity demand is soaring, trending towards 3% of global electricity demand, a fact neatly demonstrated by that usually exciting hockey-stick-shaped curve. The base case electricity requirement of 945 TWh by 2030 is almost double Germany's current total electricity demand. Considering that most of this energy is ultimately dissipated as heat, it raises the question: how can we remove and, more importantly, use that heat more efficiently?
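To put the 945 TWh figure into more tangible terms, here is a minimal sketch that converts an annual energy total into the average continuous power it implies. The function name and the flat 8,760-hour year are my own simplifications, not something taken from the IEA article.

```python
HOURS_PER_YEAR = 8760  # assumption: a non-leap year, with demand spread evenly


def average_power_gw(annual_twh: float) -> float:
    """Convert an annual energy total in TWh to average continuous power in GW."""
    # 1 TWh = 1,000 GWh; dividing GWh per year by hours per year gives GW.
    return annual_twh * 1000 / HOURS_PER_YEAR


iea_base_case_twh = 945  # the 2030 base case quoted above
print(f"{average_power_gw(iea_base_case_twh):.0f} GW of average continuous demand")
```

Under those assumptions the base case works out at a little over 100 GW running around the clock, nearly all of which ends up as heat that has to go somewhere.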

Traditional Air Cooling vs. Next-Gen Solutions:

Air Cooling: The legacy standard (CRAC/CRAH units, hot/cold aisles).

For decades, air cooling has been the undisputed workhorse of the data centre industry. Facilities have relied on massive Computer Room Air Conditioner (CRAC) or Computer Room Air Handler (CRAH) units to blast chilled air through raised floors, using carefully segregated hot and cold aisles to prevent servers from overheating. While this legacy approach is well understood, relatively easy to maintain, and perfectly adequate for traditional rack densities of 5 kW to 10 kW, it is simply hitting its physical limits. Air just doesn't have the heat-carrying capacity required to keep up with today's ultra-dense, AI-driven workloads.

Liquid/Fluid Cooling: The emerging necessity for high-density computing (direct-to-chip, immersion).

As rack densities soar past 50 kW and even 100 kW to support AI, machine learning, and high-performance computing (HPC), the industry is being forced to pivot to liquid cooling.

Unlike air, liquid is an incredibly effective heat-transfer medium: for the same volume and temperature rise, water can absorb roughly 3,500 times more heat than air.
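The gap between air and liquid is easier to appreciate with numbers. The sketch below uses the sensible-heat balance Q = ρ · V̇ · cp · ΔT to estimate the volumetric flow needed to carry away a 100 kW rack load. The property values are approximate room-temperature textbook figures, the 10 K coolant temperature rise is an assumption for illustration, and water stands in for whatever coolant a real system would use (immersion systems use dielectric fluids with different properties).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density_kg_m3: float         # approximate value near room temperature
    specific_heat_j_kg_k: float  # approximate value near room temperature

    @property
    def volumetric_heat_capacity(self) -> float:
        """Heat absorbed per cubic metre per kelvin of temperature rise (J/m^3/K)."""
        return self.density_kg_m3 * self.specific_heat_j_kg_k


def required_flow_m3_s(heat_load_w: float, coolant: Coolant, delta_t_k: float) -> float:
    """Volumetric flow needed to remove heat_load_w with a coolant temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (coolant.volumetric_heat_capacity * delta_t_k)


AIR = Coolant("air", density_kg_m3=1.2, specific_heat_j_kg_k=1005.0)
WATER = Coolant("water", density_kg_m3=998.0, specific_heat_j_kg_k=4180.0)

rack_load_w = 100_000  # a 100 kW rack, as mentioned above
delta_t_k = 10.0       # assumed coolant temperature rise

air_flow = required_flow_m3_s(rack_load_w, AIR, delta_t_k)
water_flow = required_flow_m3_s(rack_load_w, WATER, delta_t_k)

print(f"air:   {air_flow:.1f} m^3/s")
print(f"water: {water_flow * 1000:.1f} L/s")
print(f"ratio: {air_flow / water_flow:.0f}x")
```

With these assumed values, the same rack needs about 8.3 m³/s of air against about 2.4 L/s of water. Real systems differ in coolant, temperature rise, and heat-exchanger performance, so treat this as an order-of-magnitude comparison only.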

Today, this transition is largely driven by two primary architectures:

Direct-to-Chip (Cold Plates): This method brings the cooling medium as close to the heat source as possible without the liquid ever touching the electronics. Coolant is pumped through tiny microchannels inside a sealed metal plate that sits directly on top of the hottest components—typically the CPUs and GPUs. As the processors generate heat, the liquid absorbs it and carries it away to a secondary heat exchanger. Direct-to-chip systems are highly effective, capturing roughly 70% to 80% of a server's heat, though the remaining ambient heat still requires supplemental air cooling in the room.
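It is worth seeing what the leftover 20% to 30% means in practice. The short sketch below applies the capture range quoted above to a 100 kW rack; the function name is hypothetical and the calculation is simple proportion, not a model of any particular product.

```python
def residual_air_load_kw(rack_kw: float, capture_fraction: float) -> float:
    """Heat (kW) left for room air cooling after cold plates capture their share."""
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    return rack_kw * (1.0 - capture_fraction)


rack_kw = 100.0  # a high-density AI rack, per the densities mentioned earlier

for capture in (0.70, 0.80):
    leftover = residual_air_load_kw(rack_kw, capture)
    print(f"{capture:.0%} captured -> {leftover:.0f} kW still rejected to air")
```

On these figures, 20 kW to 30 kW per rack still ends up in the room air, which is more than the 5 kW to 10 kW that a traditional air-cooled rack was designed around. In other words, direct-to-chip reduces the air cooling burden at high densities but does not remove it.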

Immersion Cooling (Submerged): This is the ultimate, no-compromise approach to thermal management. Instead of piping liquid to specific chips, entire servers are literally submerged in a tank filled with an engineered, non-conductive (dielectric) fluid. Because the fluid harmlessly touches every single component on the motherboard, it captures virtually all of the heat generated by the hardware. As the servers run, the fluid absorbs the heat and is either circulated through a cooling loop (single-phase) or allowed to boil and naturally condense back into liquid (two-phase). This creates a nearly silent, hyper-efficient thermal environment that eliminates the need for server fans altogether.

What’s Driving the Shift?

Higher TDP (Thermal Design Power) chips

Sustainability goals

Space constraints

Innovations like direct-to-chip cold plates and full-scale immersion cooling are no longer experimental novelties; they are rapidly becoming the baseline necessity for next-generation hardware. By bringing the cooling medium directly to the heat source (the CPU or GPU), fluid cooling not only unlocks maximum processing performance but also drastically reduces the overall energy consumed by the data centre's cooling infrastructure.

Fluid cooling is the future, but it isn't without its growing pains.

Whilst fluid cooling provides a step change in performance, it brings challenges of its own. Keep your eyes peeled for our future blog post highlighting some of the areas of concern.

Follow us here to get updates on the next post in this series.
